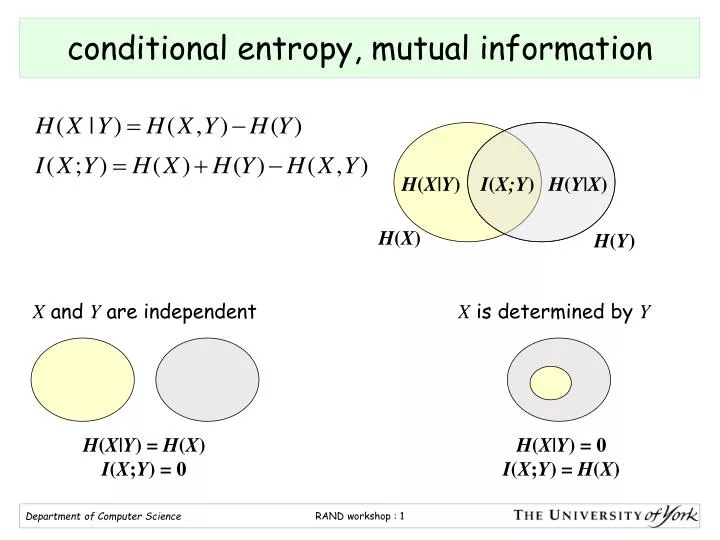

The conditional entropy reveals how much variability remains in $y$ when $x$ is fixed. More generally, it measures how much entropy a random variable $X$ has remaining if we have already learned the value of a second random variable $Y$. It is referred to as the entropy of $X$ conditional on $Y$, and is written $H(X \mid Y)$.

The entropy of a discrete random variable $X$ in base 2 (bits) will be denoted by $H(X)$. We will interchangeably use $H(p)$ or $H(X)$, where $p$ is the probability distribution of $X$. The binary entropy function, $-x \log_2 x - (1 - x) \log_2 (1 - x)$, is taken with the convention that $0 \log_2 0 = 0$. If the probability that $X = x$ is denoted by $p(x)$, then we denote by $p(x \mid y)$ the probability that $X = x$, given that we already know that $Y = y$. In Bayesian language, $Y$ represents our prior information about $X$.

The conditional entropy is the entropy of the conditional probability: it is just the Shannon entropy with $p(x \mid y)$ replacing $p(x)$, averaged over all possible values of $Y$. Written the other way around, the conditional entropy is the expected value of the entropy of $p(Y \mid X = x_i)$: $H(Y \mid X) = \sum_i p(x_i)\, H(Y \mid X = x_i)$. Using the Bayesian product rule $p(x, y) = p(x \mid y)\, p(y)$, one finds that the conditional entropy is equal to $H(X \mid Y) = H(X, Y) - H(Y)$, with $H(X, Y)$ the joint entropy of $X$ and $Y$.

Yes, I think I understand the conditional entropy correctly; however, I find it a bit awkward with two "conditional variables". I'm at a loss as to where to begin with the following: show that $E[h(X \mid Y)] \le h(X)$, where $h(X)$ is the entropy of $X$ and $E[h(X \mid Y)] = -\sum_j \sum_i p_{ij} \ln \frac{p_{ij}}{q_j}$.

Calculate the Shannon entropy/relative entropy of given distribution(s). We introduce conditional Huffman encoding of DCT run-length events to improve the coding efficiency of low- and medium-bit rate video compression algorithms. The present study takes a step toward addressing this issue by introducing conditional entropy (CE) as a potential electroencephalography (EEG)-based ...
Then the conditional entropy is defined as $H(X \mid Y) = -\sum_{x, y} p(x, y) \log_2 p(x \mid y)$.
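As a rough numerical check of the identities discussed here, the sketch below computes $H(X \mid Y)$ as a $p(y)$-weighted average of the entropies of $p(x \mid y)$ and compares it with $H(X, Y) - H(Y)$. The joint table `joint` and the helper names `entropy` and `conditional_entropy` are made up for illustration; they do not come from the text above.

```python
import math


def entropy(probs):
    """Shannon entropy in bits, using the convention 0 * log2(0) = 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def conditional_entropy(joint):
    """H(X|Y) in bits, where joint[x][y] = p(x, y) (hypothetical layout)."""
    n_y = len(joint[0])
    # Marginal p(y): sum each column of the joint table over x.
    p_y = [sum(row[y] for row in joint) for y in range(n_y)]
    total = 0.0
    for y, py in enumerate(p_y):
        if py == 0:
            continue  # values of Y that never occur contribute nothing
        # p(x | y) = p(x, y) / p(y)
        p_x_given_y = [row[y] / py for row in joint]
        # Average the entropy of p(x | y) over the distribution of Y.
        total += py * entropy(p_x_given_y)
    return total


# Illustrative joint distribution over X in {0, 1} and Y in {0, 1}.
joint = [[0.25, 0.25],
         [0.50, 0.00]]

h_xy = entropy([p for row in joint for p in row])            # H(X, Y)
h_y = entropy([sum(row[y] for row in joint) for y in range(2)])  # H(Y)
h_x = entropy([sum(row) for row in joint])                   # H(X)
h_x_given_y = conditional_entropy(joint)                     # H(X | Y)

print(f"H(X|Y)      = {h_x_given_y:.4f} bits")
print(f"H(X,Y)-H(Y) = {h_xy - h_y:.4f} bits")
print(f"H(X)        = {h_x:.4f} bits")
```

For this table the two ways of computing $H(X \mid Y)$ should agree up to floating-point error, and the result should not exceed $H(X)$, in line with the inequality $E[h(X \mid Y)] \le h(X)$.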
